Editor’s Note: Artificial intelligence (“AI”) promises to be a transformational technology… and it’s accelerating at an exponential rate. To show you just how fast AI is advancing, we’re sharing an insight from Jeff Brown, founder and CEO of Brownstone Research.

Prior to publishing investment research, Jeff was a technology executive for firms like Qualcomm, NXP Semiconductors, and Juniper Networks. In other words, he comes from the industry he now covers. And below, he shares one chart that shows why AI’s progress is almost scary.

Technology is advancing so quickly now…

And gaining a deep understanding of this acceleration can be difficult. It’s a hard concept to fully grasp.

Just saying that it is happening, which is what most do, doesn’t mean much.

There’s no way to contextualize what “fast” or “very fast” or “super-fast” actually means.

And that’s why I always try to provide context, data, and visualizations… so that we can grok the significance of this extraordinary period in time.

Exponential trends are particularly hard to quantify and contextualize.

Our human brains just aren’t wired that way.

It takes a conscious effort to think exponentially.

This is one of the areas that we specialize in at Brownstone Research.

Wherever there is exponential growth, you can bet we’re neck-deep in it – researching and providing our subscribers with the insights and investment recommendations to profit from these trends.

The underlying growth engine fueling the exponential growth in technology and biotechnology (which is now tech-driven) is semiconductor technology and computational systems (servers, supercomputers, and hyperscale data centers).

If we don’t deeply understand this, we can’t possibly understand the trends.

The Truth About AI Compute

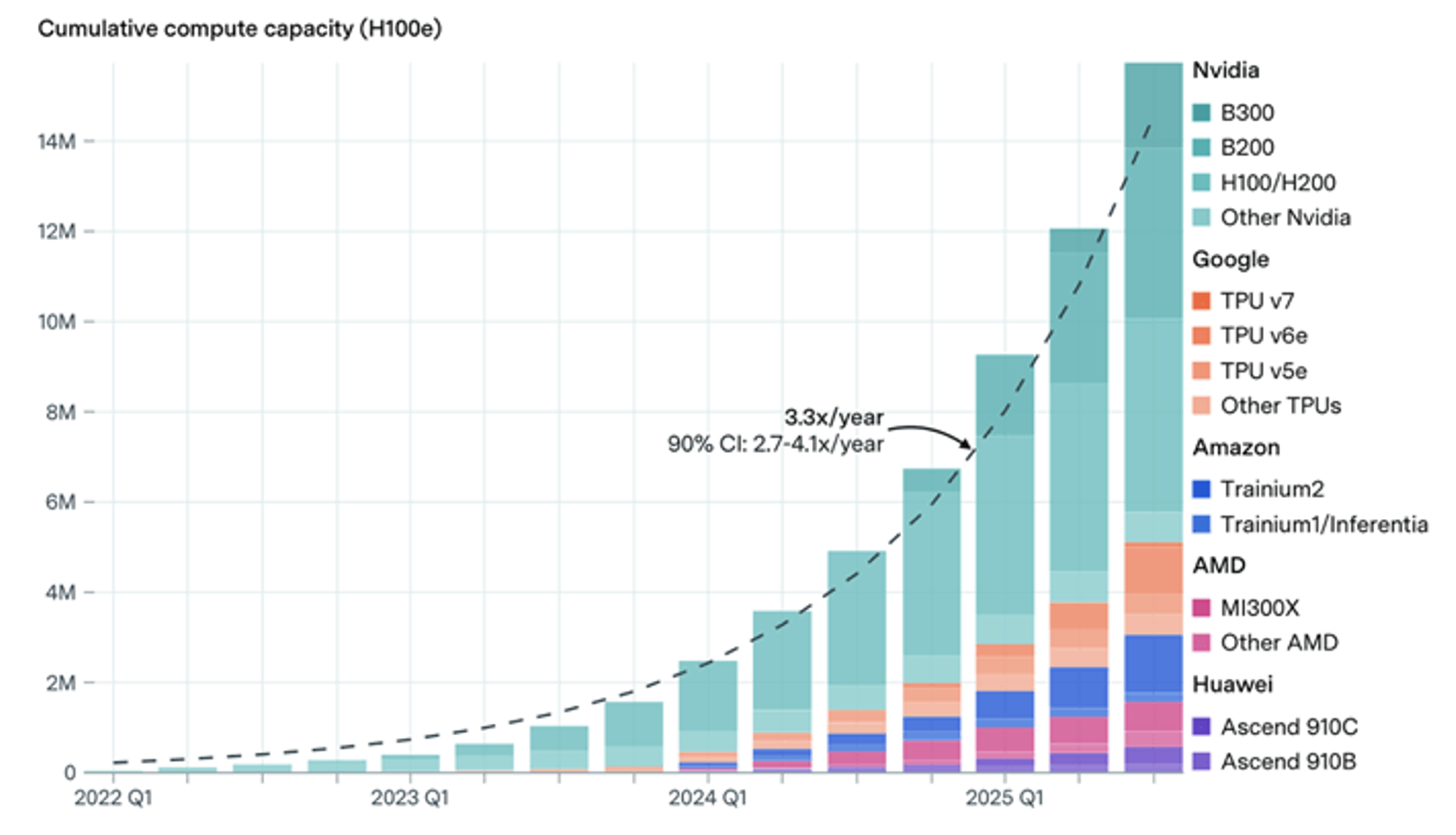

The chart below is a great visualization of what is happening right now.

It graphs the global artificial intelligence (“AI”) computing capacity since the first quarter of 2022.

And it shows how it has grown over time.

The chart has been normalized across five different companies’ semiconductor solutions, with a unit of compute being equivalent to one NVIDIA H100e graphics processing unit (“GPU”).

Source: Epoch AI

It’s easy to see the inflection point around early 2024, when the curve starts to go vertical.

It’s a classic example of exponential growth.

In this case, it is the exponential growth of global AI computing capacity.

And this capacity is directly related to breakthroughs in artificial intelligence.

Every increase in computational capacity results in exponential growth in technological breakthroughs.

That’s why it is so hard to keep up with everything that is happening in tech/biotech.

And here’s the key point that should put a pit in your stomach – that feeling of both excitement and anxiety.

What the above chart shows us is that global AI computing capacity is doubling every seven months.

And that doesn’t truly capture the scope of what’s occurring. Actually, it’s now happening even faster than that.

The data in the chart above is only through the third quarter of 2025…

If I had to estimate, we’re now doubling capacity every six months.
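For readers who want to check a doubling time against raw numbers, here is a minimal Python sketch. The two capacity readings are made-up placeholders, not figures from the Epoch AI chart, and `doubling_time_months` is a hypothetical helper name. It also assumes smooth exponential growth between the two readings, which real data won't follow exactly.

```python
import math


def doubling_time_months(start_capacity, end_capacity, months_elapsed):
    """Estimate months per doubling, assuming steady exponential growth."""
    if start_capacity <= 0 or end_capacity <= start_capacity or months_elapsed <= 0:
        raise ValueError("need positive, growing capacity over a positive time span")
    # Number of doublings between the two readings.
    doublings = math.log2(end_capacity / start_capacity)
    return months_elapsed / doublings


# Hypothetical readings (NOT from the Epoch AI chart): 8x growth over 21 months.
example = doubling_time_months(1.0, 8.0, 21)
print(f"{example:.1f} months per doubling")
```

Eight-fold growth is three doublings, so 21 months works out to seven months per doubling.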

And that window in which computing capacity is doubled will get even narrower before all is said and done. But here’s where the context is important…

Double the Capacity in Half the Time

Moore’s Law, which became the reference example for exponential growth, measured the change in the number of transistors fitting into one unit of space.

Moore’s Law accurately predicted a doubling every 18 to 24 months.

And along similar lines, we have seen related exponential growth in the computational power of semiconductors, and the exponential decline in the cost-per-unit of compute.

One year ago, global AI computing capacity was doubling at a pace of about every 10 to 12 months.

Think about that.

In just a year, the doubling in AI compute has gone from every 10 to 12 months… to roughly every six months.

And yes, by the end of the year, it will probably be happening every three or four months.
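To put those doubling times on a common footing, the rough sketch below converts each one into a growth multiple over 12 months. The doubling periods are the ones quoted in this essay. The function name and labels are illustrative assumptions, and the math assumes each pace holds steady for a full year.

```python
def annual_growth_factor(doubling_months):
    """Return how many times capacity multiplies in 12 months at a steady pace."""
    if doubling_months <= 0:
        raise ValueError("doubling_months must be positive")
    return 2 ** (12 / doubling_months)


# Doubling periods (in months) taken from the text; labels are illustrative.
scenarios = [
    ("Moore's Law, slow end", 24),
    ("Moore's Law, fast end", 18),
    ("AI compute a year ago, slow end", 12),
    ("AI compute a year ago, fast end", 10),
    ("AI compute per the chart", 7),
    ("AI compute, current estimate", 6),
    ("AI compute, year-end guess", 3),
]

for label, months in scenarios:
    factor = annual_growth_factor(months)
    print(f"{label:<34} {months:>2} months -> {factor:5.2f}x per year")
```

A 24-month doubling grows capacity about 1.4 times in a year. A six-month doubling grows it four times, and a three-month doubling grows it 16 times.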

I can feel it in my gut right now. The implications…

And yet, that chart above doesn’t even paint a complete picture.

It only highlights five companies’ semiconductor platforms for AI:

- NVIDIA (NVDA) with its GPUs, which make up about 60% of total compute
- Google (GOOGL) with its tensor processing unit (“TPU”) semiconductors
- Amazon (AMZN) with its Trainium semiconductors
- AMD (AMD) with its GPUs and inference semiconductors
- Huawei (China-based), with its Ascend semiconductors

Notably absent from this list is everyone else. So, what about the rest?

The Value of Inference

What about all of the other companies that are producing bleeding-edge semiconductors for AI applications?

Groq, for example, developed its Language Processing Unit (“LPU”) – optimized for high-performance inference – i.e., the running of AI applications. The company is not to be overlooked or “left out.”

NVIDIA’s GPU architectures are optimized for the training of large AI models, which makes them comparatively inefficient for running AI applications.

This was the impetus for Groq’s design in the first place.

Groq’s LPUs have very low latency without sacrificing accuracy when running these AI applications.

And now we know how much Groq’s technology is worth.

On Christmas Eve last year, NVIDIA announced that it was “acquiring” Groq for $20 billion.

The deal was structured as a $20 billion perpetual licensing agreement, combined with NVIDIA taking the most valuable Groq executives and employees as part of the “licensing deal.”

NVIDIA would have never gotten away with an outright acquisition – antitrust regulations would never allow for it – which is why it structured the deal the way that it did.

It simply left Groq as a shell of a company, offering cloud-based services using Groq’s LPUs.

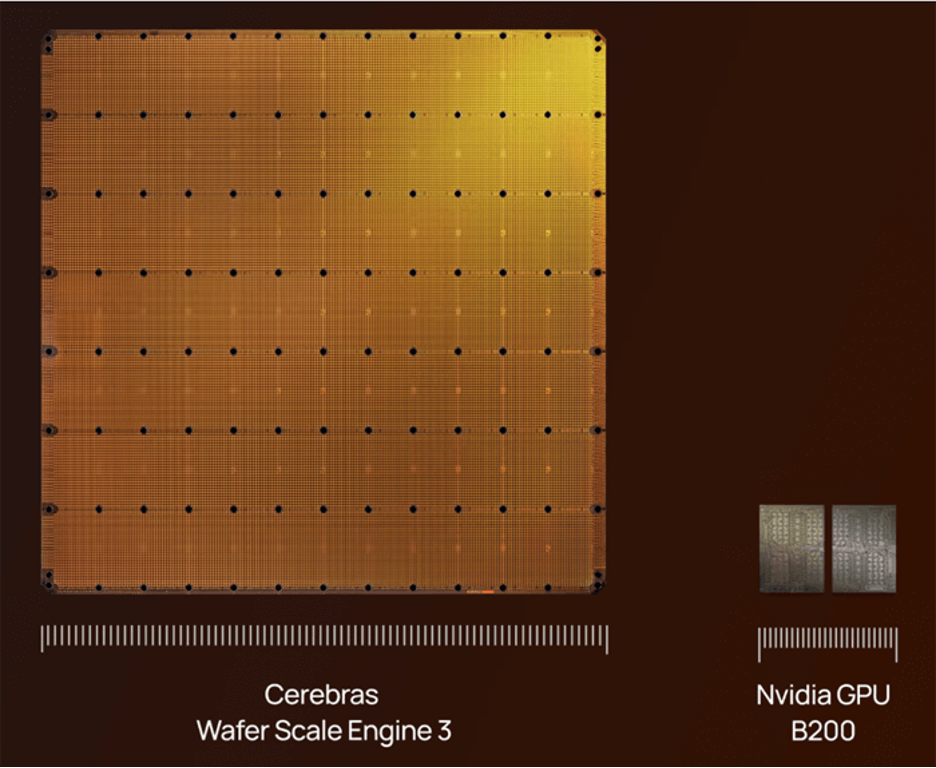

Or how about Cerebras and its Wafer Scale Engine (WSE-3)? It designed the largest semiconductor in the industry, utilizing an entire silicon wafer.

Source: Cerebras Wafer Scale Engine 3 | Source: Cerebras

Cerebras took a different design approach than Groq, but its target was the same… low-latency inference applications. And unlike Groq, Cerebras has been gearing up for an IPO, which I believe will absolutely happen this year.

That is, unless another company steps up and acquires it first.

Ripe for Acquisition

SambaNova Systems is another major player in the AI inference industry, with its Reconfigurable Data Unit (“RDU”) designs.

While SambaNova also targets inference applications, it took a unique approach with its memory architecture, combining on-chip static random-access memory (“SRAM”) with high-bandwidth memory (“HBM”) and high-capacity double data rate (“DDR”) memory.

This gives SambaNova the ability to run multiple AI models in memory and switch from one to another in microseconds.

SambaNova SN40L RDU | Source: SambaNova

SambaNova attracted the interest of Intel, which has been in advanced negotiations to acquire SambaNova for $1.6 billion, a price I believe is far too low for what SambaNova has built.

And we’re just scratching the surface with these three companies alone.

There are also Tenstorrent, d-Matrix, and potentially the most interesting of them all – Neurophos – with its optical processing unit (“OPU”), bringing record-shattering energy efficiency and exaflop performance in a single server.

The key point here is that a lot more computational resources are being brought online outside of the big five players shown in the original chart.

And the pipeline of private semiconductor companies coming to market with chips optimized for one AI application or another is full of incredible potential.

Some will go public, many will be acquired, and all of them will accelerate the development and utilization of artificial intelligence.

Regards,

Jeff Brown

Founder, Brownstone Research

Editor’s Note: Something is quietly going wrong with some of America’s favorite AI companies… and most people have no idea.

That’s why Jeff is sitting down next Wednesday with investing legend Marc Chaikin to discuss what they are calling the “Dark Chip” crisis.

It’s a looming breakdown tied to a structural flaw embedded in the AI buildout. And it could leave millions of AI chips rotting in warehouses while the companies that depend on them run into a brick wall.

But this dark prediction has a silver lining with the potential to multiply early investors’ money 12 times… for those who know what’s coming and are prepared.

Marc and Jeff are getting into all the details on April 29 at 8 p.m. ET. If you want to learn more about the Dark Chip crisis and how they are positioning to handle it, you can go here to automatically add your name to their guest list.
